In this tutorial, we will build a web scraper in Python to aggregate data from the top five soccer leagues in the world. The program searches the webpage's HTML for specified tags or classes and displays the information it selects. The concepts involved are essential for learning Python, which makes this a good project to practise and apply these skills.

This program uses Python's BeautifulSoup library to extract data from the website specified in the code. This is a hands-on Python web scraping tutorial and example project: we'll cover some general tips and tricks and common challenges, and wrap it all up with an example scraping project.
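To make the idea of searching HTML for specified tags or classes concrete, here is a minimal sketch. The HTML snippet, the class names (`league`, `team`, `name`, `points`) and the function `extract_standings` are all hypothetical and only stand in for a real league page. It also assumes the built-in `html.parser` backend rather than `'lxml'`, so that no extra parser has to be installed.

```python
from bs4 import BeautifulSoup

# Hypothetical HTML standing in for a downloaded league table page.
SAMPLE_HTML = """
<html><body>
  <h1 class="league">Example League</h1>
  <table>
    <tr class="team"><td class="name">Team A</td><td class="points">30</td></tr>
    <tr class="team"><td class="name">Team B</td><td class="points">27</td></tr>
  </table>
</body></html>
"""


def extract_standings(html):
    """Return the league name and a list of (team, points) tuples."""
    # Parse the raw HTML text into a tree of Python objects.
    soup = BeautifulSoup(html, "html.parser")

    # Select a single element by tag name and class.
    league = soup.find("h1", class_="league").get_text(strip=True)

    # Select every row with the (assumed) class "team".
    standings = []
    for row in soup.find_all("tr", class_="team"):
        name = row.find("td", class_="name").get_text(strip=True)
        points = int(row.find("td", class_="points").get_text(strip=True))
        standings.append((name, points))
    return league, standings


league, standings = extract_standings(SAMPLE_HTML)
print(league)
for name, points in standings:
    print(name, points)
```

On a real site the tag names and classes will differ, so the selectors have to be adapted after inspecting the page's HTML.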

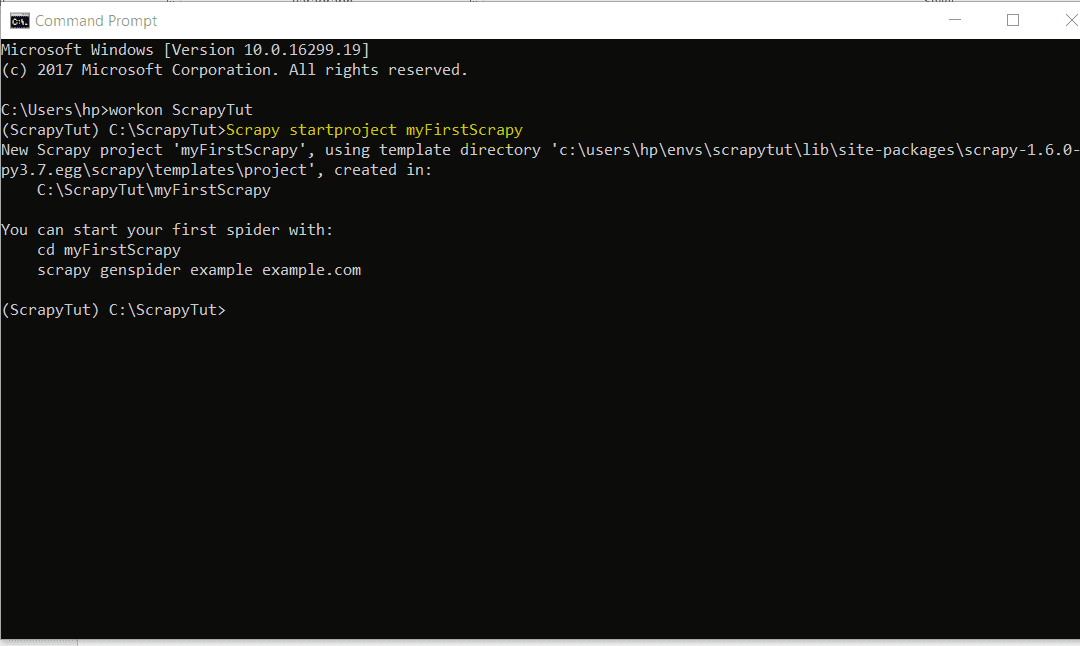

This is an intermediate-level project for those who are new to Python. Before starting this project, you should already have experience with printing, for loops, and functions, as these concepts are used throughout the project. Basic knowledge of HTML is also useful, but not necessary.

Websites are written in HTML, which means that each web page is a structured document. The Beautiful Soup package is used to parse the HTML, that is, to take the raw HTML text and break it into Python objects. Calling `soup = BeautifulSoup(html, 'lxml')` creates the parsed object, and `type(soup)` returns `bs4.BeautifulSoup`. The second argument, `'lxml'`, is the HTML parser, whose details you do not need to worry about at this point.

Building a web scraper with Python follows these steps: Step 1, select the URLs you want to scrape; Step 2, find the HTML content you want to scrape; Step 3, choose your tools and libraries; Step 4, build your web scraper in Python and review the completed code; Step 5, repeat for Madewell; then wrap up and look at next steps. In the stepwise implementation, the first step is to import the required modules.

First, we need a way to gather URLs relevant to the topic we are scraping data for. Fortunately, the Python library googlesearch makes it easy to gather URLs in response to an initial Google search.

When the data you want sits in a table on a web page, pandas makes it easy to scrape the table (`<table>` tag). After obtaining it as a DataFrame, it is of course possible to process it further.

In the Selenium with Python part of the tutorial, we'll take a look at what Selenium is and at its common functions for scraping dynamic pages and web applications. We need to install a Chrome driver to automate the browser with Selenium. Our task is to create a bot that continuously scrapes the Google News website and displays all the headlines every 10 minutes.

Finally, practise downloading multiple webpages using aiohttp, and learn how to create an asynchronous web scraper from scratch in pure Python using asyncio and aiohttp.
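The asynchronous downloading with asyncio and aiohttp mentioned above could look roughly like the sketch below. The function names (`fetch_with_aiohttp`, `download_all`, `main`) and the `max_concurrent` limit are assumptions made for illustration, not part of the original project. The download function is passed in as a parameter so that the concurrency logic can be tried out without network access.

```python
import asyncio


async def fetch_with_aiohttp(session, url):
    """Download one page with an aiohttp session and return its HTML text."""
    async with session.get(url) as response:
        response.raise_for_status()
        return await response.text()


async def download_all(urls, fetch_page, max_concurrent=5):
    """Call fetch_page(url) for every URL, at most max_concurrent at a time."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def limited(url):
        # The semaphore caps how many downloads run at the same moment.
        async with semaphore:
            return await fetch_page(url)

    # gather returns the results in the same order as the input URLs.
    return await asyncio.gather(*(limited(url) for url in urls))


async def main(urls):
    # aiohttp is a third-party package; it is imported here so that
    # download_all can be used and tested without it being installed.
    import aiohttp

    async with aiohttp.ClientSession() as session:
        return await download_all(
            urls, lambda url: fetch_with_aiohttp(session, url)
        )
```

To run it against real pages, call `asyncio.run(main(list_of_urls))` with URLs of your own choosing. Each returned HTML string can then be handed to Beautiful Soup for parsing.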
